No, not that Bert.

Google introduced BERT (Bidirectional Encoder Representations from Transformers) last year. To make life easier (and to save me from typing that whole phrase all the time), we will stick with BERT.

So what is BERT?

BERT was a breakthrough in Google’s research on Transformers (no, not the giant robots from Cybertron). A transformer is a neural network architecture based on self-attention mechanisms. Google believes these to be well suited for language understanding.
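To make "self-attention" a little less abstract, here is a rough Python sketch of the core idea: every word scores itself against every other word, and those scores decide how much each word contributes to the others. This is a toy under loud assumptions, not Google's implementation. The word vectors are made up, and I skip the learned query/key/value projections and multiple attention heads that a real Transformer uses.

```python
import math


def softmax(scores):
    # Subtract the max before exponentiating for numerical stability.
    top = max(scores)
    exps = [math.exp(s - top) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def self_attention(vectors):
    """Scaled dot-product self-attention with identity projections.

    Simplification: real Transformers first multiply each vector by learned
    query, key and value matrices. Here the raw vectors play all three roles.
    """
    dim = len(vectors[0])
    outputs, weights = [], []
    for query in vectors:
        # Score this word against every word in the sentence (itself included).
        scores = [
            sum(q * k for q, k in zip(query, key)) / math.sqrt(dim)
            for key in vectors
        ]
        row = softmax(scores)
        # The new representation is a weighted mix of all the word vectors.
        mixed = [
            sum(row[j] * vectors[j][i] for j in range(len(vectors)))
            for i in range(dim)
        ]
        weights.append(row)
        outputs.append(mixed)
    return outputs, weights


# Hypothetical 2-dimensional "embeddings", invented for illustration only.
tokens = ["river", "bank", "water"]
embeddings = [[1.0, 0.0], [0.7, 0.7], [0.9, 0.1]]

outputs, weights = self_attention(embeddings)
for token, row in zip(tokens, weights):
    print(token, [round(w, 2) for w in row])
```

Each printed row shows how strongly one word "attends" to every word in the sentence, and each row sums to 1.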

Transformers currently outperform convolutional and recurrent models on academic English to German and English to French translation benchmarks. They also require less computation to train, which makes training faster.

Google has spent considerable time, money and effort on natural language processing (NLP).

Did I mention that BERT is open sourced? Anyone in the world can train a state-of-the-art question answering system on a single Cloud TPU in about 30 minutes, or on a single GPU in a few hours.

In the simplest terms, BERT processes each word in relation to all the other words in a sentence. This allows it to understand (or try to understand) the meaning of a word by looking not only at the words before and after it, but at the whole phrase. In turn, queries are better understood, which gives you a better search result.
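The "bidirectional" bit can be illustrated with a tiny Python sketch. It only shows which words are available as context for a given word. It is not how BERT is actually built, and the two helper functions are hypothetical names I made up for the illustration.

```python
def left_to_right_context(words, i):
    # A strictly left-to-right model only sees the words before position i.
    return words[:i]


def bidirectional_context(words, i):
    # A bidirectional model sees every other word in the sentence.
    return words[:i] + words[i + 1:]


words = "how large is the grand canyon".split()
target = 1  # position of the word "large"

print(left_to_right_context(words, target))   # ['how']
print(bidirectional_context(words, target))   # ['how', 'is', 'the', 'grand', 'canyon']
```

Reading left to right, "large" only has "how" to go on. Looking both ways, it also gets "grand canyon", which is what tells you the query is about physical size.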

Mini BERT Related Rant

Now, anyone I work with will tell you that I was a little frustrated when the news about BERT broke. Not because of what it is (I think it could be absolutely game changing). No, what frustrated me was that every blog out there (short of Google’s) omitted a key fact.

All the headlines and blogs said “BERT will now apply to 10% of all searches” (or some variant of that phrase). However, this is not 100% correct.

It will be applied to 10% of searches in the US only, and only to English language searches.

Google gets roughly 5.6 billion searches per day globally. So, 10% of that would be huge (560 million), except that’s not the true figure at all.
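If you want to see how quickly that headline number shrinks, here is a quick back-of-the-envelope sketch in Python. The 5.6 billion figure is the rough global number quoted above. The "eligible share" values are made-up placeholders, not real statistics, because I don't have a reliable figure for how many daily searches are US English queries.

```python
def affected_searches(total_per_day, bert_percent, eligible_percent=100):
    """Searches touched per day, using whole-number percentages.

    eligible_percent is the share of all searches BERT could apply to at all
    (for example, English queries in the US). Integer maths avoids float
    rounding noise.
    """
    return total_per_day * eligible_percent * bert_percent // (100 * 100)


DAILY_SEARCHES = 5_600_000_000  # rough global figure quoted above

# The headline reading: 10% of every search on the planet.
headline = affected_searches(DAILY_SEARCHES, bert_percent=10)
print(f"Headline: {headline:,}")  # Headline: 560,000,000

# Hypothetical eligible shares, for illustration only (not real data).
for share in (10, 25, 50):
    estimate = affected_searches(DAILY_SEARCHES, 10, eligible_percent=share)
    print(f"If {share}% of searches were eligible: {estimate:,}")
```

Whatever the real share turns out to be, the figure is a fraction of the 560 million that the headlines implied.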

The main reason this frustrated me is that people would read blogs on certain sites (not naming sites or people, but we’re talking about big names) and take what they read as gospel. “Oh my god, it’s been rolled out on 10% of all searches, I’ve got loads to learn and roll out on my site.”

I’m all for BERT. I think it actually will change the game (and, from the examples, it already seems to be returning better Google search results than before). However, it’s important we don’t omit anything about new tech, even by mistake. If it’s new, we all have to learn it, and most people will look to certain people in the industry to help guide them.

What does all of this mean for organic search?

Google is applying the model to both ranking and featured snippets in Search. The goal is to help Search do a better job of finding useful information and returning relevant answers to your search queries.

Although it is currently only rolled out in the US for search results, Google have stated that they plan on learning from the improvements BERT makes in English, and then applying them to other languages.

The end goal of all of this is to return relevant results in many languages offered in search.

From a searcher’s point of view, it means you will see more relevant results for your searches.

From a website owner’s point of view, if rolled out fully, it could mean big changes.

What impact should you see on your rankings?

Realistically, unless you are ranking for English queries in the US, probably not much at the moment. This isn’t a full-scale search algorithm update, but more of a bolt-on of a machine learning neural network.

However, if you want to see an impact when it rolls out worldwide (which I am confident it will), now is the time to look at your site and see how you can improve.

Is your content conversational? Have you approached it from a question answering perspective? Or is your content thin and lacking any real substance?

Now is the perfect time to address any or all of these issues. BERT will most likely change keyword research even further too. Keyword research is already becoming less and less about specific keywords, and more about topics. If you know a topic, you are probably going to write good content on it, including all the relevant words related to it.

One of the reasons I believe this is such a big thing: Google themselves have said that this is one of the biggest steps forward in Search in the past 5 years.

What about featured snippets?

This is where I see BERT being truly game changing: in snippets, ‘People also ask’, and voice search (not to be confused with voice assistance). From a snippet perspective, BERT has already been rolled out to two dozen countries, and Google says it has already seen massive improvements in Korean, Hindi and Portuguese.

From a ‘People also ask’ perspective, it is still amazing. BERT is trying to understand language in a more conversational way. We have all learned keyword speak. We all search daily and know how to get the best result by typing as little as possible.

For example, most people would type “Grand Canyon size” to find out the size of the Grand Canyon, rather than typing “how large is the Grand Canyon”. On the flip side, most of us wouldn’t ask our friends the same question in conversation by saying “Hey Dave, Grand Canyon size?” You would probably use the second example above, and that is what Google is aiming to do: make search more conversational while still understanding what we actually mean.

A few examples

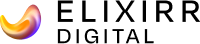

You can already see that the newer result answers the search query much better than the original SERP. BERT allows Google to understand that “for someone” is actually an important part of the search query, rather than missing the meaning or ignoring it completely, as it did first time round.

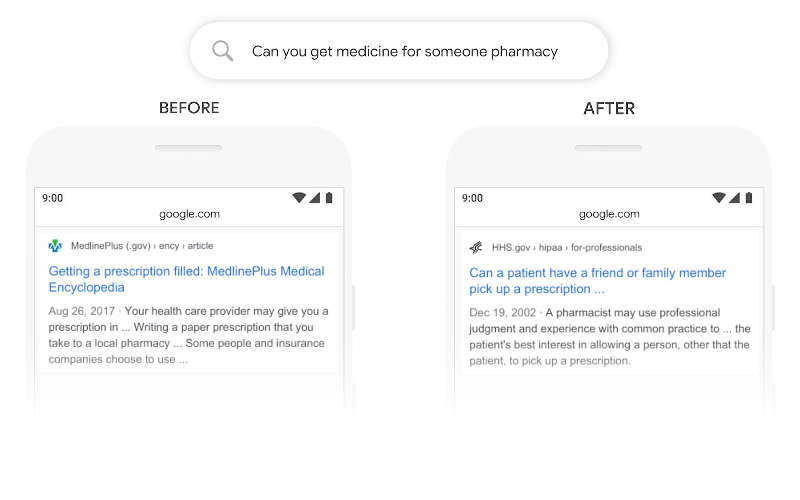

In the past, Google put huge emphasis on the word “curb” but ignored “no”, which is critical to responding to this query with the best answer.

So, to summarise

BERT has been partially rolled out: in the US, for English queries in the search results. It has also been rolled out for featured snippets in other countries, and is having an impact.

Understanding the nuances of language is hard (not just for machines). Google admits that BERT may still not get everything right, but they are always trying to improve.